"The beauty of music is in the ear of the beholder", we are always told. Or perhaps we are not always told this, but I imagine that we should be. Because while I like a lot of music that other people like, I don't always agree with what other people insist is not just music, but "good" music. I'm unabashedly a "romanticist": I love the piano concertos of Rachmaninov, and most of what Chopin wrote. I'm a Beethoven guy, but I have learned to like Bach, and there is some stuff that Mozart wrote that should be in the Hall of Fame of Music, compared to all music ever written.

But when it comes to Stravinsky, Schönberg, Alban Berg, or Karlheinz Stockhausen, I'm at a loss. I don't understand it. It doesn't sound like music to me.

Is it them, or is it me? Is there something about the music that the aforementioned composers wrote that is too complex for my brain? What is the complexity of music, anyway? Is it obvious that some music is just more complicated than some other music, and that it takes more sophisticated brains than mine to appreciate the postmodern kind of music?

I have to say that there is some evidence in favor of the position that, yes, my brain is just not sophisticated enough to appreciate Stockhausen. That I'm just not bright enough for Berg. Too rudimentary for Rautavaara. You get the drift.

The evidence is multifaceted. As a young lad, I simply assumed that I was right in loathing all this "modern music" nonsense (even though I had rehearsed Britten's War Requiem as an 11-year-old, a memory that I would only recover much later). I liked Beethoven, Rachmaninov, and Chopin. Then I saw a piece on TV that would change my perception of music forever. I saw a "Harvard Lecture" by Leonard Bernstein, a conductor and all-around musical genius whom I admired (I was perhaps 18). It is one of the now famous "The Unanswered Question" lectures by Bernstein (but I did not know that when I saw it). Bernstein lectured about Schoenberg (as he spelled his name after he moved to the US). I can still remember my astonishment as he took apart Schoenberg's "Verklärte Nacht", lectured me about its structure, and pointed out the references to earlier classical music (sometimes inverted). I realized then and there that I had been extremely naive about music.

Arnold Schoenberg, by Egon Schiele (Source: Wikimedia)

That does not mean that I immediately came to like Schoenberg's music. I was still wondering whether anybody really liked it, as opposed to appreciating it on an intellectual level.

Then came the time when I was called to sing Stravinsky.

We are performing a formidable jump here, from my formative years to a period where I was a Full Professor at the Keck Graduate Institute, a university specializing in Applied Life Sciences in Claremont, California. They had a choir there, and as I had sung in a choir as an 11-year-old (culminating in the aforementioned Britten episode that never resulted in a performance) I figured I'd get Mozart's Requiem off my bucket list. I admit I'm a bit obsessed with this piece of music. I perhaps know way too much about it at this point. Anyway, the Claremont College Choir was going to perform it, so I signed up. (Well, you don't just sign up: you have to audition, and pass.)

During the audition, I was asked to sing some fairly atonal stuff. I had no inkling whatsoever that the choir director was testing me on Stravinsky's Symphony of Psalms. But after I was admitted to the choir, I learned that this was the piece we were going to perform alongside the Requiem.

I bought the CD, and listened to it on my daily commute from Pasadena to Claremont. At first, it sounded like cats were being drowned. I later asked people who were at the performance, and got similar reactions. I thought I could never sing that. So I broke out GarageBand or Logic Pro and wrote the bass track (that was the voice I was to sing) onto the music, so that I could rehearse it.

With practice, the unthinkable happened. I started to understand the music. I started to like it. I started to be moved by it. I slowly realized that this was great music, and that I had been completely incapable of realizing this upon first hearing it.

This is, by the way, a dynamic that is not completely limited to music. Similar things can happen to you in the appreciation of mathematics. There is some mathematics that is utterly obvious. It is obviously beautiful, and everybody usually appreciates that beauty. Simple number theory, for example. The zeta function. But then I found that there was mathematics that I could not easily grasp. There is no beauty in mathematics that you do not understand. It may look like gibberish to you, as if the author just juxtaposed symbols with the intent to obfuscate. I still believe that there is mathematics out there that is just gibberish, but the beauty lies in those pieces that you learn to appreciate after "listening" to them for as long as it takes until you start to understand them.

So, our brains (certainly mine) are not exactly reliable judges of beauty. What is beauty anyway?

If you start to think about this question, you've got to take into consideration evolutionary forces. What we call "beauty", or "beautiful", is something that appeals to us, and there are plenty of reasons why we should be manipulated by something appealing. Countless prey have been lured into demise thusly. So what appeals to us?

The answer to this is (at least this is my answer): "We like that which we can predict".

Many, many pages have been written about how our brain processes information, but for me, the most convincing narrative is due to Jeff Hawkins, entrepreneur and neuroscientist, whom I have written about several times in this blog (perhaps most notably in its very first installment, and its second, immortalizing our very first meeting).

What Jeff taught me (first in his breathtaking book "On Intelligence", and later in person) is that our brains are primarily prediction machines. Our brains predict the future, and we love it when we are right. We hate it when we are not. Let me give you the example I learned from Jeff's book, and which I have repeated countless times.

Walking, you may think, is easy. Those who have tried to make robots walk will tell you it is not. How do we do it, then? It turns out that bipedal walking relies on a complex sensory-motor interplay, and this is not the post to dwell on its details. But we know from experiment the following: if you lose the sense of touch in your feet, your gait will be severely affected. Basically, you'll stumble, rather than walk. Why is this?

It turns out that while you are happily conversing with the person next to you while walking, your brain subconsciously makes hundreds of predictions about what your sensory systems will experience next. And when it comes to walking, it makes predictions about the exact timing of the impact of your feet with the ground. Your brain does this for every step (but of course you do not realize that, because of the "subconscious" part). And every time that your foot experiences the ground (via your feet's sensors) at precisely the predicted time, your brain (subconsciously) says "Aaaah."

Your brain likes it when its predictions are fulfilled. It is happy when anticipation is actualized. Because when all is as predicted, then all is well. And when all is well, our brain does not need to waste precious energy on attending to details when more important things have to be addressed.

But what if there is a rut in the road, or a lump in the lane? In those cases, the anticipated impact of the foot with the road will be delayed (rut) or early (lump), and our brain immediately springs to attention. The prediction was not realized, and our brain (correctly) interprets this as a harbinger of trouble. If my prediction was incorrect (so argues your brain) in this instance, it might be incorrect in the next, and this means that we need to pay close attention to the situation at hand. And so, reacting to this alert, you now inspect the path you are treading with much more care, to learn about the imperfections you hitherto ignored, and to learn to anticipate those too.

This little example, I'm sure you see immediately, is emblematic of how our brain processes all sensory information, including visual, and for the purpose of this blog post, auditory, information.

According to this view, our brain is happiest if it can anticipate the next sounds. And, when it comes to music, this predisposition of our brain begins to explain a lot about how we process music. After all, the structure of repetitions in almost all (I should say, Western) forms of music is uncanny. We like repetition, and we (dare I say it) think it is beautiful. Not too much repetition, mind you. But it is now clear that we like repetition because it makes music predictable. This is also why, we now realize much more clearly, we begin to like music after we have heard it a couple of times. And yes, some music is so simple, so derivative, so instantly recognized that we also like it instantly, upon first hearing. But perhaps we can now also understand that this is not the kind of music that requires any artistry.
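To make "repetition makes music predictable" a little more tangible, here is a toy sketch of my own (it is not a model from Jeff's book or from any paper). It assumes a deliberately naive listener who guesses each next note to be whatever has most often followed the current note so far, and it counts how often that guess is right. The function name `prediction_hit_rate` and the note lists are made up for illustration.

```python
from collections import Counter, defaultdict


def prediction_hit_rate(notes):
    """Fraction of note-to-note transitions a naive listener guesses correctly.

    The (hypothetical) listener predicts the next note as the one that has
    most often followed the current note earlier in the same piece.
    """
    follow_counts = defaultdict(Counter)  # current note -> counts of next notes
    hits = 0
    guesses = 0
    for current, following in zip(notes, notes[1:]):
        seen = follow_counts[current]
        if seen:  # a guess is only possible once this note has been heard before
            guess = seen.most_common(1)[0][0]
            hits += guess == following
        guesses += 1  # an unheard context counts as a missed prediction
        seen[following] += 1  # learn from what was actually played
    return hits / guesses if guesses else 0.0


# A looping arpeggio is almost fully predictable after its first pass ...
arpeggio = ["C", "E", "G"] * 8
# ... while a run of notes that never repeats gives the listener nothing to go on.
scale_run = ["C", "D", "E", "F", "G", "A", "B"]

print(prediction_hit_rate(arpeggio))
print(prediction_hit_rate(scale_run))
```

The looping arpeggio scores close to one and the non-repeating run scores zero, which is the crude version of the point above: repetition is what gives the predictor something to latch on to.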

How much repetition is best? Is there an optimum that has just enough repetition that we can barely predict it (creating the happiness of correct prediction) and evades the boredom of the obvious repetition that does not tax us, but annoys instead? If this is true, shouldn't an evolutionary process be able to optimize it?

Yes, you can evolve music. You can do this yourself right now, by moseying over to evolectronica, where you can click on audio loops and rate them. The average rating will determine the number of offspring any of the tunes in the population will obtain (suitably mutated, of course). Good tunes will prosper in this world. I have nothing to do with this site, but I did write about a paper that studied how such loops evolve. That paper was published in the Proceedings of the US National Academy of Sciences [1], and turns out to be an interesting application of evolutionary genetics. My commentary, highlighting the importance of epistasis between genetic traits, was published in the same journal [2].
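The rate-then-reproduce loop described above can be sketched in a few lines. This is only a minimal illustration under my own assumptions, not the site's or the paper's actual implementation: tunes are short lists of MIDI pitches, the "listener" is a stand-in function (`toy_rating`, which simply prefers notes in C major), and a mutation replaces at most one note. All names here are hypothetical.

```python
import random

C_MAJOR = {0, 2, 4, 5, 7, 9, 11}  # pitch classes our stand-in listener prefers


def toy_rating(tune):
    """Stand-in for an average listener rating: fraction of notes in C major."""
    return sum(note % 12 in C_MAJOR for note in tune) / len(tune)


def toy_mutate(tune, rng):
    """Copy a tune, occasionally replacing one note with a random pitch."""
    child = list(tune)
    if rng.random() < 0.3:
        child[rng.randrange(len(child))] = rng.randrange(48, 72)
    return child


def evolve(population, rate, mutate, generations, rng):
    """Each generation, tunes leave offspring in proportion to their rating."""
    for _ in range(generations):
        ratings = [rate(tune) for tune in population]
        if sum(ratings) == 0:
            ratings = [1.0] * len(population)  # no preference: pick uniformly
        # Higher-rated tunes are expected to be chosen as parents more often.
        parents = rng.choices(population, weights=ratings, k=len(population))
        population = [mutate(parent, rng) for parent in parents]
    return population


def mean_rating(population):
    return sum(toy_rating(tune) for tune in population) / len(population)


rng = random.Random(0)
start = [[rng.randrange(48, 72) for _ in range(8)] for _ in range(50)]
final = evolve(start, toy_rating, toy_mutate, generations=40, rng=rng)

print(f"mean rating before: {mean_rating(start):.2f}")
print(f"mean rating after:  {mean_rating(final):.2f}")
```

In the real experiment the ratings come from people clicking on loops rather than from a formula, so the fitness function is whatever the listeners happen to like. The sketch only shows the mechanics: rating decides who reproduces, and mutation supplies the variation.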

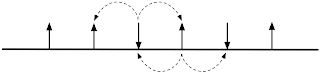

In the paper cited above, the authors use two traits to quantify the fitness of music: the "tonal clarity", and the "rhythmic complexity". They find that while during early evolution the overall musical appeal of the tunes increases as each of these traits increases, the appeal then flattens out. During that time, neither clarity nor complexity increased, the two seemingly holding each other back (see the qualitative rendition of the process in the figure above).

So what is good music? That, it appears, will always remain in the brain of the beholder, simply because different brains have different capacities to predict. Some of us will love the simplest of tunes because they stick with us immediately. Some others love the challenge of trying to understand a piece that even after one hundred listens cannot be whistled.

I've whistled Stravinsky's Symphony of Psalms, so anything is possible!

[1] R. M. MacCallum, M. Mauch, A. Burt, and A. M. Leroi, Evolution of music by public choice. Proc. Natl. Acad. Sci. USA 109 (2012) 12081–12086.

[2] C. Adami, Adaptive walks on the fitness landscape of music. Proc. Natl. Acad. Sci. USA 109 (2012) 11898–11899.
